Hello everyone, my name is Gavin Gear, and I am going to be blogging regularly here on the Extreme Windows Blog. My background includes working on the Windows sensor team (see recent talks from BUILD), and before Microsoft I graduated with a degree in Mechanical Engineering. Some of the things I enjoy include studio photography, video production, building PCs, writing apps, and inventing/fabricating/fixing. I look forward to bringing you stories where Windows powers extreme experiences, and to start I thought I’d do a quick Kinect for Windows project. Here we go!

What is Kinect for Windows?

We’ve all seen Kinect: the amazing game input device for Xbox 360 that enables controller-less console game play and control of other Xbox experiences. It’s fascinating to discover how this device uses array microphones, a projected IR dot pattern, an IR camera, and a regular RGB camera to sense the surrounding environment. With these inputs, the Kinect sensor can isolate and record sounds, generate a room depth-map, and build 3D models of human faces and skeletons. It’s safe to say that Kinect is a game-changer.

Kinect IR dot pattern as seen by modified DSLR camera

Kinect for Windows is all about enabling Windows PCs to take advantage of Kinect. The Kinect for Windows 1.5 SDK and Toolkit was released in May 2012, and includes Windows drivers for the Kinect sensor, a full SDK (Software Development Kit), a toolkit (sample code, tools), and supporting documentation.

Here’s a list of what you need to start writing Kinect apps:

- Kinect sensor*

- Windows PC running Windows 7 or later

- Visual Studio (Express or full)

- Kinect for Windows downloads (SDK and Toolkit)

*There are actually two Kinect sensors that work with the Kinect for Windows SDK and Toolkit:

Kinect for Xbox 360 sensor (says “XBOX 360” on the front) – these devices are licensed for development purposes only, and do not support “Near Mode”. This is what I used for this blog post.

Kinect for Windows sensor (says “KINECT” on the front) – these devices are optimized for Windows experiences, are licensed for use with Windows PCs, and also support “Near Mode”.

Developers can use either sensor to get started, but deployments need to be on a Kinect for Windows sensor, which can be purchased online. If you get serious about experimenting with and integrating Kinect experiences into your projects (and I hope you do), I recommend you pick up the Kinect for Windows sensor so you have access to all of the development possibilities enabled with this PC-optimized device. However, if all you’ve got is a Kinect for Xbox kicking around your living room, break it out and give it a go!

I’ve wanted to write a Windows Kinect app for a while now. The first idea that came to mind was to experiment with human presence sensing, something I had investigated while working on the Windows sensor platform. Part of that exploration included writing an application that would control Windows experiences by means of a long-distance reflective IR proximity sensor. These reflective IR sensors have their limitations, one being that they cannot distinguish between inanimate objects (like office chairs) and humans. I had wondered how a more powerful sensor like Kinect could improve human sensing accuracy for spaces like an office. Now it’s time to find out!

App Challenge: Kinect as Human Presence Sensor

I decided to give myself a challenge: In less than a day, I would attempt to write a functioning Kinect human presence detection app using some C# code that I had previously written. This existing code did not incorporate Kinect, and I had no experience with the Kinect SDK. This would be a good challenge because in addition to writing the app, I would also need to document the entire process on video using multiple cameras. Sounds like fun to me!

I started the day with the Kinect 1.5 SDK and Toolkit installed on my Windows 7 box, and a Kinect sensor that was still in its box. I had briefly talked to the Kinect for Windows team to get ideas for how to sense human presence with Kinect. To paraphrase, they told me to “take a look at face tracking, skeletal tracking, and depth”. I had no Kinect code at this point (other than SDK samples), and no links to docs or references.

Getting Set Up

From my experience with Kinect for Xbox 360, I knew that I would need about 4-6 feet of distance between myself and the sensor (however, this distance is only about 400 mm with a Kinect for Windows device). The first thing I did was to “mount” the sensor above and behind my monitors in an orientation where it would “look down” about 10 degrees at me when I was seated. The goal was to maximize field of view and distance while minimizing monitor obstruction.

Kinect sensor placement at my workstation

I personally think I should get extra credit for using some of the Kinect sensor packing materials (cardboard column supporting the sensor) to improvise this setup. I’ll be looking into a more solid mount for permanent use.

Once the Kinect sensor was powered up and plugged into the PC, it was time to validate that everything was working properly. I ran some of the SDK samples from the toolkit, and in a few minutes was able to run through some of the key scenarios.

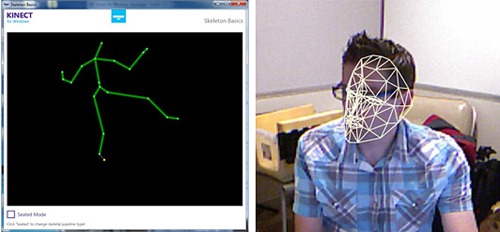

Kinect SDK Sample Screen grabs – Skeleton Basics (left, showing standing position), Face Tracking Basics (right, while seated at desk)

I spent about 10 minutes improvising the mount and running the cords, and about 5 minutes playing with the samples. So at 15 minutes into the project, I was ready to start reading docs and writing code.

Getting Into the Code

I’ll admit it: I didn’t follow the schoolbook approach of reading documentation and then writing code. Instead I started in Visual Studio, exploring the APIs with IntelliSense (Visual Studio’s code-completion feature) and reading documentation for specific items when needed. This turned out to be a time-effective way to develop such a prototype.

I ran into a problem early on when I was trying to control the elevation angle of the Kinect sensor (tilt). When I first ran my app, the following error message was displayed:

InvalidOperationException: “Kinect must be running to control the motor”

Wow, if only all error messages were this descriptive. This gave me the clue that I needed to “start” the sensor before using it. I added the necessary code, ran the app again, and the sensor tilted! I got a tingle of excitement from seeing my app control a piece of hardware in such a short period of time. The term “instant satisfaction” comes to mind.
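To make the ordering concrete, here is a minimal sketch in Python rather than the C# used for the app. `FakeSensor` and `tilt` are hypothetical stand-ins invented for illustration; they are not the Kinect SDK API, and they only mimic the behavior described above: tilting fails until the sensor has been started.

```python
class FakeSensor:
    """Hypothetical stand-in for a motorized sensor (not the Kinect SDK)."""

    def __init__(self):
        self.running = False
        self.elevation_angle = 0

    def start(self):
        # Mark the sensor as running so motor commands are accepted.
        self.running = True

    def set_elevation_angle(self, degrees):
        # Mimic the error from the post when the sensor was never started.
        if not self.running:
            raise RuntimeError("Kinect must be running to control the motor")
        self.elevation_angle = degrees


def tilt(sensor, degrees):
    """Start the sensor if needed, then tilt it and return the new angle."""
    if not sensor.running:
        sensor.start()
    sensor.set_elevation_angle(degrees)
    return sensor.elevation_angle
```

Calling `set_elevation_angle` on a fresh `FakeSensor` raises the error, while `tilt(sensor, -10)` starts the sensor first and then succeeds.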

Next, it was on to figuring out human presence detection with Kinect. Running the SDK samples proved to be a great way to “visualize” the kinds of data that Kinect exposes to developers. From what I saw, I decided to use skeletal tracking data since it appeared to offer good detection of human presence.

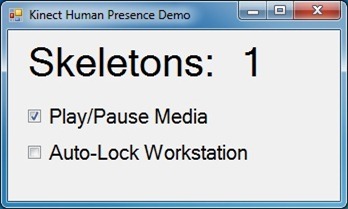

The logic I wrote to control Windows experiences is pretty simple, and is based on the number of tracked skeletons at any given point in time (humans in view of the Kinect sensor). When the number of detected humans changes, the code I wrote does the following:

Zero people: Pause media (if playing), lock workstation

One person: Play media (if not playing)

More than one person: Pause media (if playing)
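The three rules above can be sketched as a small state machine. This is an illustrative Python sketch, not the C# from the app: the names `actions_for` and `PresenceMonitor`, the action strings, and the idea that the monitor keeps its own record of whether media is playing are all assumptions made for the example.

```python
def actions_for(count, media_playing):
    """Map a tracked-skeleton count to the actions listed above."""
    if count == 0:
        # Nobody present: pause media if it is playing, then lock.
        actions = ["pause_media"] if media_playing else []
        actions.append("lock_workstation")
        return actions
    if count == 1:
        # One person: resume media if it is not already playing.
        return [] if media_playing else ["play_media"]
    # More than one person: pause media if it is playing.
    return ["pause_media"] if media_playing else []


class PresenceMonitor:
    """Hypothetical helper that reacts only when the count changes."""

    def __init__(self, media_playing=False):
        self.last_count = None
        self.media_playing = media_playing

    def update(self, count):
        # Ignore frames where the number of tracked people is unchanged.
        if count == self.last_count:
            return []
        self.last_count = count
        actions = actions_for(count, self.media_playing)
        # Keep the assumed media state in step with the emitted actions.
        if "play_media" in actions:
            self.media_playing = True
        if "pause_media" in actions:
            self.media_playing = False
        return actions
```

In a real app, `update` would be fed the number of tracked skeletons from each sensor frame, and the returned action names would be mapped to whatever mechanism controls the media player and locks the workstation.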

The simple app UI shows a numeric display of the number of tracked skeletons (present humans), and has checkboxes to allow supported features to be toggled on/off.

It’s obvious that this app’s UI won’t win any design awards, but it’s enough to drive these simple demos. With the UI in place, it was time to test out media play/pause. With my app running, I started an mp3 playing in Windows Media Player, enabled the play/pause feature, and then walked out of my office. The music stopped. When I entered and sat down, the music started playing again. This is just too fun. Following that, it was a simple matter to add the “Lock workstation” feature. Having spent about 90 minutes writing code, reading documentation, searching the internet, and doing basic testing, I was now at 1:45 for total project time. Later, when my friend stopped by, I was able to test the “pause my media when another person enters my office” feature. Cool!

Here’s a video that I put together during the project to show you how things unfolded:

Wrap-up

When I started this project, I was not sure exactly what to expect. What I discovered is that Kinect for Windows provides developers with a comprehensive set of tools, samples, and documentation, and that the APIs are easy to use. The next thing I’m going to do with Kinect is to get one of the Kinect for Windows sensors so that I can test my app with “Near Mode”. I can’t wait to see more of what developers will do with Kinect for Windows, and I have my own ideas for additional projects.

Resources:

www.kinectforwindows.com

Kinect for Windows Downloads

Kinect for Windows Hardware

You can follow me on Twitter: @GavinGear