The way users interact with apps on different devices has gotten much more personal lately, thanks to a variety of new Natural User Interface features in the Universal Windows Platform. These UWP patterns and APIs let developers easily add more natural, human-centered capabilities to their apps. For the final blog post in the series, we have extended the Adventure Works sample to add support for Ink on devices that support it, and to add support for speech interaction where it makes sense (including both synthesis and recognition). Make sure to get the updated code for the Adventure Works Sample from the GitHub repository so you can refer to it as you read on.

And in case you missed the blog post from last week on how to enable great social experiences, we covered how to connect your app to social networks such as Facebook and Twitter, how to enable second screen experiences through Project “Rome”, and how to take advantage of the UWP Maps control and make your app location aware. To read last week’s blog post or any of the other blog posts in the series, or to watch the recordings from the App Dev on Xbox live event that started it all, visit the App Dev on Xbox landing page.

Adventure Works (v3)

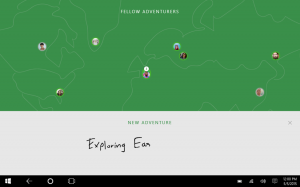

We are continuing to build on top of the Adventure Works sample app we worked with in the previous two blog posts. If you missed those, make sure to check them out; both are listed under Previous Xbox Series Posts at the end of this post. As a reminder, Adventure Works is a social photo app that allows the user to:

- Capture, edit, and store photos for a specific trip

- Auto-analyze and auto-tag friends using Cognitive Services vision APIs

- View albums from friends on an interactive map

- Share albums on social networks like Facebook and Twitter

- Use one device to remote control slideshows running on another device using Project Rome

- and more …

There is always more to be done, and for this final round of improvements we will focus on two sets of features:

- Ink support to annotate images, enable natural text input, and use inking as a presentation tool in connected slideshow mode.

- Speech Synthesis and Speech Recognition (with a little help from Cognitive Services for language understanding) to create a way to quickly access information using speech.

More Personal Computing with Ink

Inking in Windows 10 allows users with inking-capable devices to draw and annotate directly on the screen with a device like the Surface Pen – and if you don’t have a pen handy, you can use your finger or a mouse instead. Windows 10 built-in apps like Sticky Notes, Sketchpad and Screen Sketch support inking, as do many Office products. Besides preserving drawings and annotations, inking also uses machine learning to recognize and convert ink to text. OneNote goes a step further by recognizing shapes and equations in addition to text.

Best of all, you can easily add Inking functionality into your own apps, as we did for Adventure Works, with one line of XAML markup to create an InkCanvas. With just one more line, you can add an InkToolbar to your canvas that provides a color selector as well as buttons for drawing, erasing, highlighting, and displaying a ruler. (In case you have the Adventure Works project open, the InkCanvas and InkToolbar implementation can be found in PhotoPreviewView.)

[code lang="xml"]
<InkCanvas x:Name="Inker"></InkCanvas>
<InkToolbar TargetInkCanvas="{x:Bind Inker}" VerticalAlignment="Top"/>
[/code]

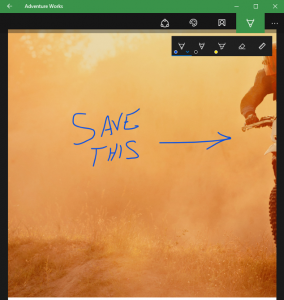

The InkCanvas allows users to annotate their Adventure Works slideshow photos. This can be done both directly and remotely through the Project “Rome” code highlighted in the previous post. When done on the same device, the ink strokes are saved off to a GIF file which is then associated with the original slideshow image.

When the image is displayed again during later viewings, the strokes are extracted from the GIF file, as shown in the code below, and inserted back into a canvas layered on top of the image in PhotoPreviewView. The code for saving and extracting ink strokes is found in the InkHelpers class.

[code lang="csharp"]
var file = await StorageFile.GetFileFromPathAsync(filename);
if (file != null)
{
    using (var stream = await file.OpenReadAsync())
    {
        // Replace whatever is on the canvas with the strokes stored in the GIF file.
        inker.InkPresenter.StrokeContainer.Clear();
        await inker.InkPresenter.StrokeContainer.LoadAsync(stream);
    }
}
[/code]
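The snippet above covers the loading direction only. For the saving direction, here is a minimal sketch of how strokes could be written to a file. It is not the code from InkHelpers: the class name `InkSaveSketch` and the method `SaveStrokesAsync` are hypothetical, and we assume the caller already holds a writable `StorageFile`. `InkStrokeContainer.SaveAsync` writes the strokes as ink data embedded in a GIF, which is why the same file can later be passed to `LoadAsync`.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Storage;
using Windows.Storage.Streams;
using Windows.UI.Xaml.Controls;

// Hypothetical helper, for illustration only (not the Adventure Works InkHelpers class).
public static class InkSaveSketch
{
    // Returns false when there was nothing to save.
    public static async Task<bool> SaveStrokesAsync(InkCanvas inker, StorageFile file)
    {
        var container = inker.InkPresenter.StrokeContainer;

        // Skip the write entirely when the canvas has no strokes.
        if (container.GetStrokes().Count == 0)
        {
            return false;
        }

        using (IRandomAccessStream stream = await file.OpenAsync(FileAccessMode.ReadWrite))
        {
            // Truncate any previous content so stale bytes do not trail the new data.
            stream.Size = 0;

            using (IOutputStream output = stream.GetOutputStreamAt(0))
            {
                await container.SaveAsync(output);
                await output.FlushAsync();
            }
        }

        return true;
    }
}
```

A call such as `await InkSaveSketch.SaveStrokesAsync(Inker, file)` would typically run when the user leaves the annotated photo.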

Ink strokes can also be drawn on one device (like a Surface device) and displayed on another one (an Xbox One). In order to do this, the Adventure Works code collects the user’s pen strokes using the underlying InkPresenter object that powers the InkCanvas. It then converts the strokes into a byte array and serializes them over to the remote instance of the app. You can find out more about how this is implemented in Adventure Works by looking through the GetStrokeData method in the SlideshowSlideView control and the SendStrokeUpdates method in SlideshowClientPage.
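To make the "strokes to byte array" step more concrete, here is one way it could be done with an in-memory stream. This is a sketch under our own assumptions, not the GetStrokeData implementation: the class `InkBytesSketch` and both method names are hypothetical, and the whole container is serialized at once, whereas the sample may well send incremental updates.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Storage.Streams;
using Windows.UI.Input.Inking;

// Hypothetical helper, for illustration only.
public static class InkBytesSketch
{
    // Serializes every stroke in the container into a byte array.
    public static async Task<byte[]> ToBytesAsync(InkStrokeContainer container)
    {
        if (container.GetStrokes().Count == 0)
        {
            return new byte[0];
        }

        using (var stream = new InMemoryRandomAccessStream())
        {
            await container.SaveAsync(stream);

            var bytes = new byte[stream.Size];
            using (var reader = new DataReader(stream.GetInputStreamAt(0)))
            {
                await reader.LoadAsync((uint)bytes.Length);
                reader.ReadBytes(bytes);
            }

            return bytes;
        }
    }

    // Replaces the container's strokes with the ones encoded in the byte array.
    public static async Task FromBytesAsync(InkStrokeContainer container, byte[] bytes)
    {
        container.Clear();
        if (bytes == null || bytes.Length == 0)
        {
            return;
        }

        using (var stream = new InMemoryRandomAccessStream())
        {
            using (var writer = new DataWriter(stream))
            {
                writer.WriteBytes(bytes);
                await writer.StoreAsync();
                // Detach so disposing the writer does not close the stream we still need.
                writer.DetachStream();
            }

            stream.Seek(0);
            await container.LoadAsync(stream);
        }
    }
}
```

The sending side would call `ToBytesAsync` and hand the result to whatever transport it uses; the receiving side would call `FromBytesAsync` on the container of its own InkCanvas.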

It is sometimes useful to save the ink strokes and original image in a new file. In Adventure Works, this is done to create a thumbnail version of an annotated slide for quick display as well as for uploading to Facebook. You can find the code used to combine an image file with an ink stroke annotation in the RenderImageWithInkToFIleAsync method in the InkHelpers class. It uses the Win2D DrawImage and DrawInk methods of a CanvasDrawingSession object to blend the two together, as shown in the snippet below.

[code lang="csharp"]
CanvasDevice device = CanvasDevice.GetSharedDevice();
CanvasRenderTarget renderTarget = new CanvasRenderTarget(device, (int)inker.ActualWidth, (int)inker.ActualHeight, 96);
var image = await CanvasBitmap.LoadAsync(device, imageStream);

using (var ds = renderTarget.CreateDrawingSession())
{
    var imageBounds = image.GetBounds(device);
    //…
    ds.Clear(Colors.White);
    // Draw the photo first, stretched to the size of the ink canvas...
    ds.DrawImage(image, new Rect(0, 0, inker.ActualWidth, inker.ActualHeight), imageBounds);
    // ...then draw the ink strokes on top of it.
    ds.DrawInk(inker.InkPresenter.StrokeContainer.GetStrokes());
}
[/code]

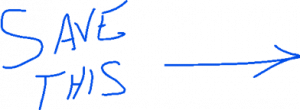
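Once the photo and the ink have been blended into the render target, the result still has to be written out. A minimal sketch of that last step follows, assuming the caller provides the destination `StorageFile`. The class `RenderTargetSaveSketch` and its method are hypothetical names, and JPEG is our choice for the example rather than something the sample is known to use.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Graphics.Canvas;
using Windows.Storage;

// Hypothetical helper, for illustration only.
public static class RenderTargetSaveSketch
{
    // Encodes the contents of a Win2D render target into the given file as a JPEG.
    public static async Task SaveAsJpegAsync(CanvasRenderTarget renderTarget, StorageFile file)
    {
        using (var stream = await file.OpenAsync(FileAccessMode.ReadWrite))
        {
            // Start from an empty file so a shorter image does not leave old bytes behind.
            stream.Size = 0;
            await renderTarget.SaveAsync(stream, CanvasBitmapFileFormat.Jpeg);
        }
    }
}
```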

Ink Text Recognition

Adventure Works also takes advantage of Inking’s text recognition feature to let users handwrite the name of their newly created Adventures. This capability is extremely useful if someone is running your app in tablet mode with a pen and doesn’t want to bother with the onscreen keyboard. Converting ink to text relies on the InkRecognizerContainer class. Adventure Works encapsulates this functionality in a templated control called InkOverlay which you can reuse in your own code. The core implementation of ink to text really just requires instantiating an InkRecognizerContainer and then calling its RecognizeAsync method.

[code lang="csharp"]
var inkRecognizer = new InkRecognizerContainer();
var recognitionResults = await inkRecognizer.RecognizeAsync(_inker.InkPresenter.StrokeContainer, InkRecognitionTarget.All);
[/code]

You can imagine this being very powerful when the user has a large form to fill out on a tablet device and they don’t have to use the onscreen keyboard.
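RecognizeAsync returns a list of results, roughly one per recognized word, and each result carries a ranked list of text candidates. A minimal sketch of turning that into a single string, for example to fill a text field, could look like the following. The class `InkTextSketch` and the method `RecognizeTextAsync` are hypothetical, and taking the first candidate of every result is a simplifying assumption; InkOverlay may handle candidates differently.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;
using Windows.UI.Input.Inking;

// Hypothetical helper, for illustration only.
public static class InkTextSketch
{
    // Returns the recognized text, or an empty string when there are no strokes.
    public static async Task<string> RecognizeTextAsync(InkStrokeContainer strokes)
    {
        // Do not call the recognizer when there is nothing to recognize.
        if (strokes.GetStrokes().Count == 0)
        {
            return string.Empty;
        }

        var recognizer = new InkRecognizerContainer();
        var results = await recognizer.RecognizeAsync(strokes, InkRecognitionTarget.All);

        // Keep the top-ranked candidate of each result and join them with spaces.
        var words = results
            .Select(r => r.GetTextCandidates().FirstOrDefault())
            .Where(w => !string.IsNullOrEmpty(w));

        return string.Join(" ", words);
    }
}
```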

More Personal Computing with Speech

There are two sets of APIs that are used in Adventure Works that enable a great natural experience using speech. First, UWP speech APIs allow developers to integrate speech-to-text (recognition) and text-to-speech (synthesis) into their UWP apps. Speech recognition converts words spoken by the user into text for form input, for text dictation, to specify an action or command, and to accomplish tasks. Both free-text dictation and custom grammars authored using the Speech Recognition Grammar Specification (SRGS) are supported.

Second, Language Understanding Intelligent Service (LUIS) is a Microsoft Cognitive Services API that uses machine learning to help your app figure out what people are trying to say. For instance, if someone wants to order food, they might say “find me a restaurant” or “I’m hungry” or “feed me”. You might try a brute force approach to recognize the intent to order food, listing out all the variations on the concept “order food” that you can think of – but of course you’re going to come up short. LUIS lets you set up a model for the “order food” intent that learns, over time, what people are trying to say.

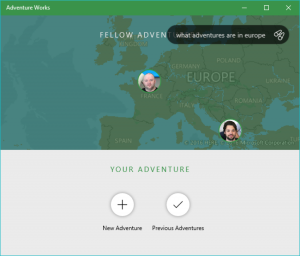

In Adventure Works, these APIs are combined to create a variety of speech-related features. For instance, the app can listen for an utterance like “Adventure Works, start my latest slideshow” and it will open a slideshow for you when it hears this command. It can also respond using speech when appropriate to answer a question. LUIS, in turn, augments this speech recognition with language understanding to improve the recognition of natural language phrases.

The speech capabilities for our app are wrapped in a simple assistant called Adventure Works Aide (look for AdventureWorksAideView.xaml). Saying the phrase “Adventure Works…” will invoke it. It will then listen for spoken patterns such as:

- “What adventures are in <location>.”

- “Show me <person> adventure.”

- “Who is closest to me.”

Adventure Works Aide is powered by a custom SpeechService class. There are two SpeechRecognizer instances that are used at different times, first to recognize the “Adventure Works” phrase at any time:

[code lang="csharp"]
_continousSpeechRecognizer = new SpeechRecognizer();
_continousSpeechRecognizer.Constraints.Add(new SpeechRecognitionListConstraint(new List<String>() { "Adventure Works" }, "start"));
var result = await _continousSpeechRecognizer.CompileConstraintsAsync();
//…
await _continousSpeechRecognizer.ContinuousRecognitionSession.StartAsync(SpeechContinuousRecognitionMode.Default);
[/code]

and then to understand free form natural language and convert it to text:

[code lang="csharp"]
_speechRecognizer = new SpeechRecognizer();
var result = await _speechRecognizer.CompileConstraintsAsync();
SpeechRecognitionResult speechRecognitionResult = await _speechRecognizer.RecognizeAsync();
if (speechRecognitionResult.Status == SpeechRecognitionResultStatus.Success)
{
    string str = speechRecognitionResult.Text;
}
[/code]

As you can see, the SpeechRecognizer API is used both for listening continuously for specific constraints throughout the lifetime of the app and for converting free-form speech to text at a specific time. The continuous recognition session can be set to recognize phrases from a list of strings, or it can even use a more structured SRGS grammar file, which provides the greatest control over the speech recognition by allowing for multiple semantic meanings to be recognized at once. However, because we want to understand every variation the user might say and use LUIS for our semantic understanding, we can use the free-form speech recognition with the default constraints.
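A continuous session reports what it hears through its ResultGenerated event. The sketch below shows one way to wire that event to the "start" tag used in the list constraint. It is our own illustration rather than the SpeechService code: the class `KeyPhraseListenerSketch`, the `KeyPhraseHeard` event, and the choice to ignore low-confidence results are all assumptions.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;
using Windows.Media.SpeechRecognition;

// Hypothetical wrapper, for illustration only (not the Adventure Works SpeechService).
public sealed class KeyPhraseListenerSketch
{
    private readonly SpeechRecognizer _recognizer = new SpeechRecognizer();

    // Raised when the key phrase is heard. Handlers run on a background thread.
    public event EventHandler KeyPhraseHeard;

    public async Task<bool> StartAsync()
    {
        // The second argument is the tag reported back when this constraint matches.
        _recognizer.Constraints.Add(
            new SpeechRecognitionListConstraint(new List<string> { "Adventure Works" }, "start"));

        var compilation = await _recognizer.CompileConstraintsAsync();
        if (compilation.Status != SpeechRecognitionResultStatus.Success)
        {
            return false;
        }

        _recognizer.ContinuousRecognitionSession.ResultGenerated += OnResultGenerated;
        await _recognizer.ContinuousRecognitionSession.StartAsync();
        return true;
    }

    private void OnResultGenerated(
        SpeechContinuousRecognitionSession sender,
        SpeechContinuousRecognitionResultGeneratedEventArgs args)
    {
        var result = args.Result;

        // Assumption: only react to reasonably confident matches.
        bool confident = result.Confidence == SpeechRecognitionConfidence.High
                      || result.Confidence == SpeechRecognitionConfidence.Medium;

        if (confident && result.Constraint != null && result.Constraint.Tag == "start")
        {
            KeyPhraseHeard?.Invoke(this, EventArgs.Empty);
        }
    }
}
```

Because the event arrives off the UI thread, a handler that touches XAML elements would need to marshal back through the dispatcher first.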

Note: before using any of the speech APIs on Xbox, the user must give permission to your application to access the microphone. Not all APIs currently show the dialog automatically, so you will need to invoke the dialog yourself. Check out the CheckForMicrophonePermission function in SpeechService.cs to see how this is done in Adventure Works.
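One common way to force the permission prompt is to initialize a MediaCapture object for audio only, which makes the system ask the user if they have not decided yet. The sketch below follows that pattern. It is an assumption on our part that CheckForMicrophonePermission works along these lines; the class `MicrophonePermissionSketch` and the method `RequestAsync` are hypothetical, and the app manifest must declare the microphone capability for any of this to work.

```csharp
using System;
using System.Threading.Tasks;
using Windows.Media.Capture;

// Hypothetical helper, for illustration only.
public static class MicrophonePermissionSketch
{
    // Returns true when the app can use the microphone.
    public static async Task<bool> RequestAsync()
    {
        try
        {
            var settings = new MediaCaptureInitializationSettings
            {
                // Audio only: we do not want a camera prompt.
                StreamingCaptureMode = StreamingCaptureMode.Audio,
                MediaCategory = MediaCategory.Speech
            };

            using (var capture = new MediaCapture())
            {
                // Shows the consent dialog the first time the app asks.
                await capture.InitializeAsync(settings);
            }

            return true;
        }
        catch (UnauthorizedAccessException)
        {
            // The user (or a system policy) denied microphone access.
            return false;
        }
        catch (Exception)
        {
            // Any other failure, for example no audio capture device being present.
            return false;
        }
    }
}
```

Calling this once before starting either recognizer lets the app show a friendly message instead of failing when access was denied.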

When the continuous speech recognizer recognizes the key phrase, it immediately stops listening, shows the UI for the AdventureWorksAide to let the user know that it’s listening, and starts listening for natural language.

[code lang="csharp"]
await _continousSpeechRecognizer.ContinuousRecognitionSession.CancelAsync();
ShowUI();
SpeakAsync("hey!");
var spokenText = await ListenForText();
[/code]

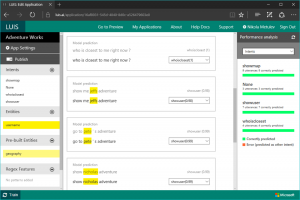

Subsequent utterances are passed on to LUIS which uses training data we have provided to create a machine learning model to identify specific intents. For this app, we have three different intents that can be recognized: showuser, showmap, and whoisclosest (but you can always add more). We have also defined an entity for username for LUIS to provide us with the name of the user when the showuser intent has been recognized. LUIS also provides several pre-built entities that have been trained for specific types of data; in this case, we are using an entity for geography locations in the showmap intent.

To use LUIS in the app, we used the official NuGet library, which allowed us to register specific handlers for each intent when we send over a phrase.

[code lang="csharp"]
var handlers = new LUISIntentHandlers();
_router = IntentRouter.Setup(Keys.LUISAppId, Keys.LUISAzureSubscriptionKey, handlers, false);
var handled = await _router.Route(text, null);
[/code]

Take a look at the HandleIntent method in the LUISAPI.cs file and at the LUISIntentHandlers class, which handles each intent defined in the LUIS portal and is a useful reference for future LUIS implementations.

Finally, once the text has been processed by LUIS and the intent has been processed by the app, the AdventureWorksAide might need to respond back to the user using speech, and for that, the SpeechService uses the SpeechSynthesizer API:

[code lang="csharp"]
_speechSynthesizer = new SpeechSynthesizer();
var syntStream = await _speechSynthesizer.SynthesizeTextToStreamAsync(toSpeak);
_player = new MediaPlayer();
_player.Source = MediaSource.CreateFromStream(syntStream, syntStream.ContentType);
_player.Play();
[/code]

The SpeechSynthesizer API can use a specific voice for the generation, chosen from the voices installed on the system, and it can even use SSML (Speech Synthesis Markup Language) to control how the speech is generated, including volume, pronunciation, and pitch.
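As a rough illustration of both options, the sketch below picks an installed voice and speaks a piece of text wrapped in SSML. The class `SsmlSketch`, the method `SpeakSsmlAsync`, the preference for a female voice, and the particular pitch and volume values are all our own assumptions for the example; none of this is taken from the Adventure Works SpeechService.

```csharp
using System;
using System.Linq;
using System.Net;
using System.Threading.Tasks;
using Windows.Media.Core;
using Windows.Media.Playback;
using Windows.Media.SpeechSynthesis;

// Hypothetical helper, for illustration only.
public static class SsmlSketch
{
    // Speaks the text through the given player using a chosen voice and SSML prosody.
    public static async Task SpeakSsmlAsync(
        SpeechSynthesizer synthesizer, MediaPlayer player, string text)
    {
        // Pick the first installed female voice, if any; otherwise keep the default voice.
        VoiceInformation voice = SpeechSynthesizer.AllVoices
            .FirstOrDefault(v => v.Gender == VoiceGender.Female);
        if (voice != null)
        {
            synthesizer.Voice = voice;
        }

        // Escape the text so characters like '<' or '&' cannot break the markup.
        string ssml =
            "<speak version='1.0' xmlns='http://www.w3.org/2001/10/synthesis' " +
            "xml:lang='" + synthesizer.Voice.Language + "'>" +
            "<prosody pitch='high' volume='loud'>" + WebUtility.HtmlEncode(text) + "</prosody>" +
            "</speak>";

        SpeechSynthesisStream stream = await synthesizer.SynthesizeSsmlToStreamAsync(ssml);
        player.Source = MediaSource.CreateFromStream(stream, stream.ContentType);
        player.Play();
    }
}
```

The synthesizer and the player are passed in so that the caller controls their lifetime, for example by keeping them as fields for as long as the audio is playing.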

The entire flow, from invoking the Adventure Works Aide to sending the spoken text to LUIS, and finally responding to the user is handled in the WakeUpAndListen method.

There’s more

Though not used in the current version of the project, there are other APIs that you can take advantage of for your apps, both as part of the UWP platform and as part of Cognitive Services.

For example, on desktop and mobile devices, Cortana can recognize speech or text directly from the Cortana canvas and activate your app or initiate an action on behalf of your app. It can also expose actions to the user based on insights about them, and with user permission it can even complete the action for them. Using a Voice Command Definition (VCD) file, developers have the option to add commands directly to the Cortana command set (commands like: “Hey Cortana show adventure in Europe in Adventure Works”). Cortana app integration is also part of our long-term plans for voice support on Xbox, even though it is not supported today. Visit the Cortana portal for more info.

In addition, there are several speech and language related Cognitive Services APIs that are simply too cool not to mention:

- Custom Recognition Service – Overcomes speech recognition barriers like speaking style, background noise, and vocabulary.

- Speaker Recognition – Identify individual speakers or use speech as a means of authentication with the Speaker Recognition API.

- Linguistic Analysis – Simplify complex language concepts and parse text with the Linguistic Analysis API.

- Translator – Translate speech and text with a simple REST API call.

- Bing Spell Check – Detect and correct spelling mistakes within your app.

The more personal computing features provided through Cognitive Services are constantly being refreshed, so be sure to check back often to see what new machine learning capabilities have been made available to you.

That’s all folks

This was the last blog post (and sample app) in the App Dev on Xbox series, but if you have a great idea that we should cover, please let us know. We are always looking for cool app ideas to build and features to implement. Make sure to check out the app source on our official GitHub repository, read through some of the resources provided, read through some of the other blog posts or watch the event if you missed it, and let us know what you think through the comments below or on Twitter.

Happy coding!

Resources

- Pen Interactions and Windows Ink in UWP apps

- Speech Recognition

- FamilyNotes: Introducing a Windows UWP sample using Ink, Speech and Facial Recognition

- The Ink Canvas and Ruler: combining art and technology

- Recognize Windows Ink strokes as Text

- Using Speech in your UWP apps: Look Who’s Talking

- CortanaVoiceCommand sample

- Inking sample

Previous Xbox Series Posts

- Tailoring your app for Xbox and the TV

- Unity interop and app extensibility

- Background audio and cross platform development with Xamarin

- UWP hosted web app on Xbox One

- Internet of Things on the Xbox

- Camera APIs with a dash of cloud intelligence in a UWP app

- Going social: Project Rome, Maps and social integration