This post was written by Tõnu Vanatalu, Senior Lead Program Manager, Developer Engagement at Microsoft

Windows Runtime APIs expose many functions to deal with playing and recording audio for both Windows Store apps and Windows Phone apps (starting with Windows Phone 8). These APIs handle all complexities like audio format conversion, different sample rates, and different hardware capabilities.

There are, however, some scenarios where you want to render or capture sample by sample. Fortunately, Windows has a low level audio access component called WASAPI–the Windows Audio Session API–which enables organized access to low level audio functionality for desktop applications, Windows Store apps, and Windows Phone apps alike. The API is based on COM, which means either writing your app entirely in C++ or writing a wrapper Windows Runtime Component that can be accessed from Windows apps written in other languages.

This post describes how to write Windows Store applications using WASAPI. While the examples and text are specifically meant for Windows, the APIs used will all compile under Windows Phone projects. Thus, with some modifications it will be possible to build a Windows Phone app.

WASAPI vs. XAudio2

Leaving aside media APIs that are exposed as part of WinRT, there are really two sets of APIs available to Windows apps for low level audio access–WASAPI and XAudio2. XAudio2 is purpose-built to provide a signal processing and mixing foundation for developing high performance audio engines for games. In terms of architecture, WASAPI is the lower level API and provides direct access to the hardware and the audio engine. While in XAudio2 you deal with sound source objects (PCM audio data) which you can instruct to play or loop through different mixer configurations, WASAPI allows you to receive notifications when capture data is available or render data is needed. It does not get much lower level than this. The flipside, however, is that you need to provide your own logic for mixing sounds, tracking positions, and scheduling. This is the price you pay to get maximum control. In this post we focus exclusively on WASAPI usage.

Activation

All interaction with WASAPI starts from the IAudioClient interface (or IAudioClient2, which contains additional methods to control audio hardware offloading and setting stream options). Instead of creating it, you “activate” it and then initialize it. Once initialized you can then either start or stop the stream. There is no way back though–you cannot undo initialization, you have to release the interface and activate a new one.

There are two types of streams that underlie IAudioClient: capture and render streams. The type of stream is largely defined by the type of device whose ID is passed on to activation. There is one special case though: you can open a capture stream on a render device. This will allow you to capture the bytes as they are rendered and is called a loopback stream. You can find more on this at Loopback Recording.

To get a pointer to the WASAPI interface, call ActivateAudioInterfaceAsync with the device ID from either MediaDevice.GetDefaultAudioCaptureId or MediaDevice.GetDefaultAudioRenderId if you are happy with the default audio device. Alternatively, you can enumerate the available devices using DeviceInformation.FindAllAsync with DeviceClass.AudioCapture or DeviceClass.AudioRender and then pass the Id property of an item in the returned collection to ActivateAudioInterfaceAsync.

Mixing Windows Runtime classes and COM class implementations has some challenges, so it’s practical to create a wrapper class to implement the async callback. In this sample, the most important part of the wrapper class is a static async method that returns task<ComPtr<IAudioClient2>>. This method calls the class’s private constructor, then calls ActivateAudioInterfaceAsync (passing the class instance as the async callback) and then waits for the class’s task completion event to be set. The async callback extracts the audio client interface from the callback result and sets the completion event. Please note that the class has to derive from or implement free-threaded marshaling, otherwise ActivateAudioInterfaceAsync will fail with E_ILLEGAL_METHOD_CALL.

A simplified version of the activator class is described below:

C++

class CAudioInterfaceActivator : public RuntimeClass< RuntimeClassFlags< ClassicCom >, FtmBase, IActivateAudioInterfaceCompletionHandler >

{

task_completion_event<ComPtr<IAudioClient2>> m_ActivateCompleted;

STDMETHODIMP ActivateCompleted(IActivateAudioInterfaceAsyncOperation *pAsyncOp)

{

ComPtr<IAudioClient2> audioClient;

// ... extract IAudioClient2 from pAsyncOp into audioClient

m_ActivateCompleted.set(audioClient);

return S_OK;

}

public:

static task<ComPtr<IAudioClient2>> ActivateAsync(LPCWCHAR pszDeviceId)

{

...

HRESULT hr = ActivateAudioInterfaceAsync(

pszDeviceId,

__uuidof(IAudioClient2),

nullptr,

pHandler.Get(),

&pAsyncOp);

return create_task(pActivator->m_ActivateCompleted);

}

};

Getting parameters

Before the initialization of the capture or render streams, we need to decide a few things: what is the audio format going to be, what is the buffer size, and are we going to use hardware offload and possibly a raw stream?

While most of the methods of IAudioClient2 cannot be called before the stream is initialized, some of them can. Determining what audio formats we can use is a little tricky because WASAPI lacks methods to obtain a list of supported audio formats. Instead there is a method called IsFormatSupported. This makes it possible to build this list by enumerating over all possible sample rates and bit depths to get the formats.

Calling IsFormatSupported needs a WAVEFORMATEXTENSIBLE structure. If using shared mode, IsFormatSupported can suggest a closest match to the requested audio format. There is also a method called GetMixFormat which retrieves the stream format that the audio engine uses for its internal processing of shared-mode streams.

For PCM encoding and stereo only you can use this code to build the WAVEFORMATEXTENSIBLE structure:

BOOL SupportsFormat(ComPtr<IAudioClient2> audioClient,unsigned samplesPerSecond, unsigned bitsPerSample)

{

WAVEFORMATEXTENSIBLE waveFormat = {}; // Zero-initialize so that unset fields are not left with garbage

waveFormat.Format.cbSize = sizeof(WAVEFORMATEXTENSIBLE) - sizeof(WAVEFORMATEX);

waveFormat.Format.wFormatTag = WAVE_FORMAT_EXTENSIBLE;

waveFormat.Format.nSamplesPerSec = samplesPerSecond;

waveFormat.Format.nChannels = 2;

waveFormat.Format.wBitsPerSample = bitsPerSample;

waveFormat.Format.nBlockAlign = waveFormat.Format.nChannels * (waveFormat.Format.wBitsPerSample / 8);

waveFormat.Format.nAvgBytesPerSec = waveFormat.Format.nBlockAlign * samplesPerSecond;

waveFormat.Samples.wValidBitsPerSample = bitsPerSample;

waveFormat.dwChannelMask = SPEAKER_FRONT_LEFT | SPEAKER_FRONT_RIGHT; // Just support stereo at this stage

waveFormat.SubFormat = bitsPerSample == 32 ? KSDATAFORMAT_SUBTYPE_IEEE_FLOAT : KSDATAFORMAT_SUBTYPE_PCM;

return audioClient->IsFormatSupported(AUDCLNT_SHAREMODE_EXCLUSIVE, reinterpret_cast<WAVEFORMATEX *>(&waveFormat), NULL) == S_OK;

}
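The enumeration described earlier (probing every combination of sample rate and bit depth) can be sketched in portable C++. This is only an illustration: CandidateFormat and EnumerateSupportedFormats are hypothetical names that are not part of WASAPI, and the predicate stands in for a call such as SupportsFormat above.

```cpp
#include <functional>
#include <vector>

// One candidate format: sample rate in Hz and bits per sample.
// (Hypothetical helper type, not part of WASAPI.)
struct CandidateFormat
{
    unsigned samplesPerSecond;
    unsigned bitsPerSample;
};

// Probe every rate/bit-depth combination and keep the ones the predicate
// accepts. In an app, the predicate would wrap a check such as
// SupportsFormat(audioClient, rate, bits).
std::vector<CandidateFormat> EnumerateSupportedFormats(
    const std::vector<unsigned> &sampleRates,
    const std::vector<unsigned> &bitDepths,
    const std::function<bool(unsigned, unsigned)> &isSupported)
{
    std::vector<CandidateFormat> supported;
    for (unsigned rate : sampleRates)
    {
        for (unsigned bits : bitDepths)
        {
            if (isSupported(rate, bits))
            {
                supported.push_back({rate, bits});
            }
        }
    }
    return supported;
}
```

Passing the check in as a callable keeps the loop independent of the audio client, so the list-building logic can be exercised without any audio hardware.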

As explained above, if requesting a shared mode stream, you can retrieve the format that the audio engine uses internally by calling IAudioClient::GetMixFormat. The audio engine format usually uses float sample values, and its sample rate depends on the default audio format set in the control panel and on the requested stream properties. The preferred way of setting the audio format is to call IAudioClient2::SetClientProperties with the appropriate properties and then call IsFormatSupported with the format of the source you already have (i.e. a file source, or the format that the application generates data in). If that fails, call IAudioClient2::SetClientProperties with the appropriate properties and then call GetMixFormat to determine the sample rate.

Windows 8.1 introduces the possibility to request a “raw” audio stream. The audio hardware and drivers sometimes already contain digital signal processing on the signal path for echo cancellation, bass boost, headphone virtualization, loudness equalization, etc. Requesting a raw stream essentially means bypassing these effects (please note there is some processing that cannot be bypassed like the limiter). You can test for the capability to request a raw stream by testing the System.Devices.AudioDevice.RawProcessingSupported property key of the device.

C++

static Platform::String ^PropKey_RawProcessingSupported = L"System.Devices.AudioDevice.RawProcessingSupported";

task<bool> AudioFX::QuerySupportsRawStreamAsync(Platform::String ^deviceId)

{

Platform::Collections::Vector<Platform::String ^> ^properties = ref new Platform::Collections::Vector<Platform::String ^>();

properties->Append(PropKey_RawProcessingSupported);

return create_task(Windows::Devices::Enumeration::DeviceInformation::CreateFromIdAsync(deviceId, properties)).then(

[](Windows::Devices::Enumeration::DeviceInformation ^device)

{

bool bIsSupported = false;

if (device->Properties->HasKey(PropKey_RawProcessingSupported) == true)

{

bIsSupported = safe_cast<bool>(device->Properties->Lookup(PropKey_RawProcessingSupported));

}

return bIsSupported;

});

}

If you want to opt into hardware offloading on platforms that support this, use IAudioClient2::IsOffloadCapable to test if offloading is available and IAudioClient2::GetBufferSizeLimits to obtain the allowable buffer lengths. Only render streams whose category is set to AudioCategory_BackgroundCapableMedia or AudioCategory_ForegroundOnlyMedia support offloading. Hardware offloading moves audio frames from memory to the hardware device without involving the CPU, which saves battery life. However, hardware offload is generally meant for low-power scenarios, not low-latency ones. There is additional bookkeeping to be done when using an offload stream, so unless absolutely necessary, offload should generally not be used for low latency.

Both raw processing and hardware offload can be requested by calling IAudioClient2::SetClientProperties before initializing the stream:

C++

// Set the render client options

AudioClientProperties renderProperties = {

sizeof(AudioClientProperties),

true, // Request hardware offload

AudioCategory_ForegroundOnlyMedia, // One of the two categories that support offload

useRawStreamForCapture ? AUDCLNT_STREAMOPTIONS_RAW : AUDCLNT_STREAMOPTIONS_NONE // Request raw stream if supported

};

HRESULT hr = m_RenderClient->SetClientProperties(&renderProperties);

Initialization

Initializing the audio client will put the stream into a state where it can be started and stopped, thus making it ready for operation. Please note that there is no functionality to “uninitialize” or “reinitialize” IAudioClient2. Again, the only way to accomplish this is to release the audio interface by calling IAudioClient2::Release() or the equivalent if using smart pointer wrappers.

The following sections explain the arguments required by IAudioClient::Initialize in more detail.

Client Modes

You can initialize the WASAPI audio client in one of two modes: shared mode or exclusive mode. When opening the stream in shared mode, your application interfaces with the audio engine component, which can work with several apps at the same time and thus lets all the applications function. This is the preferred mode for streams, because the big drawback of exclusive mode is that it denies other running apps and processes access to the audio hardware. In exclusive mode, you’d be interacting with the driver directly and would be totally dependent on the formats that the driver supports. You would also need to do additional bookkeeping around app suspension, so the use of exclusive mode is strongly discouraged.

Stream Flags

The most interesting flag in the arguments to Initialize is AUDCLNT_STREAMFLAGS_EVENTCALLBACK, which enables your application to receive callback notifications when capture data is available or new render data is requested at the end of each audio engine processing pass. The benefit of using event mode is that your code does not need to schedule wait loops and gets called only when data handling is needed. If you open the audio stream in non-event mode, your app is responsible for regularly monitoring the buffer state and scheduling reads and writes as appropriate.

Buffer size and Periodicity

For simplicity, the buffer size and periodicity arguments to Initialize should be the same. This means that each audio engine pass processes one buffer length (which is required for event driven mode anyway).

Requesting buffer lengths is not a simple affair. You request values and the system tries to find the closest matching values. For shared mode you should pass in zero for the periodicity value (you can retrieve the periodicity value used by the audio engine from IAudioClient::GetDevicePeriod). In exclusive mode, passing any value below the minimum returned from GetDevicePeriod will fail with AUDCLNT_E_BUFFER_SIZE_ERROR. Thus, to get the smallest buffer size, pass the minimum value returned from GetDevicePeriod. Buffer size and periodicity are both REFERENCE_TIME values (100 ns units), but the data that you read or write is counted in frames (a frame holds one sample for each channel). The time specified might correspond to a fractional frame count. If that happens, Initialize returns AUDCLNT_E_BUFFER_SIZE_NOT_ALIGNED, and the app should call IAudioClient::GetBufferSize (which returns the frame count of the buffer), calculate the corresponding period length, and call Initialize again. Here’s how to do that:

C++

HRESULT hr = audioClient->Initialize(

mode,

streamFlags,

bufferSize,

bufferSize,

pFormat,

NULL);

if (hr == AUDCLNT_E_BUFFER_SIZE_NOT_ALIGNED)

{

UINT32 nFrames = 0;

hr = audioClient->GetBufferSize(&nFrames);

// Calculate period that would equal to the duration of proposed buffer size

REFERENCE_TIME alignedBufferSize = (REFERENCE_TIME) (1e7 * double(nFrames) / double(pFormat->nSamplesPerSec) + 0.5);

hr = audioClient->Initialize(

mode,

streamFlags,

alignedBufferSize,

alignedBufferSize,

pFormat,

NULL);

}
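The unit conversion behind this retry can be checked in isolation. The following is a minimal, portable sketch that follows the article's description of alignment as "a whole number of frames"; ReferenceTime, IsFrameAligned, and FramesToReferenceTime are hypothetical names introduced for illustration, and the alias only mirrors the 64-bit REFERENCE_TIME type from the Windows SDK.

```cpp
#include <cstdint>

// REFERENCE_TIME counts 100 ns units; a local alias keeps this sketch portable.
using ReferenceTime = std::int64_t;

const ReferenceTime kUnitsPerSecond = 10000000; // 100 ns units in one second

// True when the duration maps to a whole number of frames at this sample rate.
bool IsFrameAligned(ReferenceTime duration, unsigned samplesPerSecond)
{
    return (duration * samplesPerSecond) % kUnitsPerSecond == 0;
}

// Duration of nFrames rounded to the nearest 100 ns unit, using the same
// arithmetic as the retry path above.
ReferenceTime FramesToReferenceTime(unsigned nFrames, unsigned samplesPerSecond)
{
    return static_cast<ReferenceTime>(
        1e7 * double(nFrames) / double(samplesPerSecond) + 0.5);
}
```

For example, 10 ms (100000 units) at 44100 Hz is exactly 441 frames, while 3 ms (30000 units) would be 132.3 frames and therefore is not aligned in this sense.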

Audio format

You need to pass in a pointer to a WAVEFORMATEX structure that describes the requested audio format. Make sure the audio format is supported, otherwise Initialize fails with AUDCLNT_E_UNSUPPORTED_FORMAT.

Consent prompt

Windows apps need to declare their intent to use audio capture devices (the Microphone capability) in the app manifest in order to call IAudioClient::Initialize. The first time this is called, Windows will prompt the user for their consent with a message dialog. Note that contrary to documentation on MSDN, the call to Initialize cannot happen on a UI thread, otherwise the UI will lock up. So always specify use_arbitrary() as the continuation context for the task that wraps the Initialize call:

C++

AudioInterfaceActivator::ActivateAudioClientAsync(deviceId).then(

[this](ComPtr<IAudioClient2> audioClient)

{

// ...

hr = audioClient->Initialize(

AUDCLNT_SHAREMODE_SHARED,

AUDCLNT_STREAMFLAGS_EVENTCALLBACK,

bufferSize,

bufferSize,

pWaveFormat,

NULL);

// ...

}, task_continuation_context::use_arbitrary());

If the user denies consent, then IAudioClient::Initialize returns E_ACCESSDENIED; the user will have to later go to the Settings > Permissions pane to enable the device. Remember that the user can take as long as they want to reply to the consent prompt, so expose the initialization operation as an async action.

C++

Windows::Foundation::IAsyncAction ^AudioFX::InitializeAsync()

{

return create_async(

[this]() ->task<void>

{

// ...

Scheduling

Scheduling is perhaps the trickiest part in running real-time audio. Fortunately there is a special component in Windows called Multimedia Class Scheduler service (MMCSS). This service allows you to execute time-critical multimedia threads at high priority while making sure the CPU isn’t overloaded. Most of the functionality of the MMCSS is not directly accessible from Windows apps–one has to use Media Foundation work queues, which can make use of the MMCSS and conveniently offer multithreaded task scheduling.

Media Foundation asynchronous callbacks are executed through the IMFAsyncCallback interface. As with the activation callback interface, a convenient way to implement it is to wrap it inside a class.

C++

template<class T>

class CAsyncCallback : public RuntimeClass< RuntimeClassFlags< ClassicCom >, IMFAsyncCallback >

{

DWORD m_dwQueueId;

public:

typedef HRESULT(T::*InvokeFn)(IMFAsyncResult *pAsyncResult);

CAsyncCallback(T ^pParent, InvokeFn fn) : m_pParent(pParent), m_pInvokeFn(fn), m_dwQueueId(0)

{

}

// IMFAsyncCallback methods

STDMETHODIMP GetParameters(DWORD *pdwFlags, DWORD *pdwQueue)

{

*pdwQueue = m_dwQueueId;

*pdwFlags = 0;

return S_OK;

}

STDMETHODIMP Invoke(IMFAsyncResult* pAsyncResult)

{

return (m_pParent->*m_pInvokeFn)(pAsyncResult);

}

void SetQueueID(DWORD queueId)

{

m_dwQueueId = queueId;

}

T ^m_pParent;

InvokeFn m_pInvokeFn;

};

The wrapper class implements two methods: one for callback (Invoke) and the other one which is used to retrieve the queue ID to schedule the callback on the right work queue. You should set the queue value before scheduling any callbacks.

To set up Media Foundation work queues, you first call MFLockSharedWorkQueue with an MMCSS task class name: “Pro Audio” is the one that will guarantee highest real time priority for the thread. This method then returns an identifier to the correct queue, and you must make sure that your implementation of IMFAsyncCallback::GetParameters returns this queue ID.

To schedule a work item that runs when an event is set, use MFPutWaitingWorkItem; to run a work item immediately, use MFPutWorkItemEx2. You can cancel a pending work item by calling MFCancelWorkItem.

C++

public ref class CAudioClient sealed

{

CAsyncCallback<CAudioClient> m_Callback;

HRESULT OnCallback(IMFAsyncResult *);

ComPtr<IMFAsyncResult> m_CallbackResult;

MFWORKITEM_KEY m_CallbackKey;

HANDLE m_hCallbackEvent;

public:

CAudioClient() : m_Callback(this, &CAudioClient::OnCallback)

{

// IMFAsyncCallback methods

// Set up work queues and callback

DWORD dwTaskId = 0, dwMFProAudioWorkQueueId = 0;

HRESULT hr = MFLockSharedWorkQueue(L"Pro Audio", 0, &dwTaskId, &dwMFProAudioWorkQueueId);

m_Callback.SetQueueID(dwMFProAudioWorkQueueId);

hr = MFCreateAsyncResult(nullptr, &m_Callback, nullptr, &m_CallbackResult);

// ...

// Wait for the event and then execute callback at realtime priority

hr = MFPutWaitingWorkItem(m_hCallbackEvent, 0, m_CallbackResult.Get(), &m_CallbackKey);

// ...

// Cancel the pending callback

hr = MFCancelWorkItem(m_CallbackKey);

Scheduling functions are expecting an IMFAsyncResult pointer–you can create IMFAsyncResult objects by calling MFCreateAsyncResult. If you are not using the object and state properties of the IMFAsyncResult, it is practical to reuse the object for successive callbacks in your class.

Capture and Rendering

There are a few other things that the app might need to set up before it’s ready to start streaming. If you have set up event-based streaming, you need to create the kernel event object through CreateEvent and register it with the audio client by calling IAudioClient::SetEventHandle. When starting the stream, you should then use MFPutWaitingWorkItem to schedule the callback that runs when audio data is available (capture stream) or requested (render stream).

To get audio frames from the capture device buffer or write audio frames to the render device buffer, you need the IAudioCaptureClient or IAudioRenderClient interface respectively. You can obtain these and other interfaces for the audio client by calling IAudioClient::GetService.

C++

ComPtr<IAudioClock> renderClock;

ComPtr<IAudioRenderClient> renderClient;

hr = m_RenderClient->GetService(__uuidof(IAudioRenderClient), &renderClient);

hr = m_RenderClient->GetService(__uuidof(IAudioClock), &renderClock);

Your app might also want to pre-allocate memory for audio buffers as part of setup before starting the stream.

Starting the stream is as simple as calling IAudioClient::Start, and to stop the stream you call IAudioClient::Stop. Both Start and Stop take only a few milliseconds to execute, so there is no specific need to implement them as async functions.

Capture

After you start the streams, capture streams will start to gather audio data and render streams will start to render. Whenever the capture buffer is filled for an event-based stream, the event is set and the preset callback is executed by the MF work queue component. Using a non-event-based stream for capture is neither practical nor efficient, as you would need to run a loop to check for available frames in the buffer, so in this post we look only at event-based capture streams.

Be mindful to spend as little time in the callback as necessary. Your code will be running at real-time priority and calling any functionality that can block the thread can cause glitches in audio and holdups in processing. Therefore it would be best just to include minimal flow logic and just copy data to pre-allocated buffers.
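One common way to keep the callback this short is to copy samples into a pre-allocated ring buffer and let a normal-priority thread do the heavy work. The class below is a minimal sketch of that idea, not code from the sample: SampleRing is a hypothetical name, it assumes float samples, and it is only safe with exactly one writer thread and one reader thread.

```cpp
#include <atomic>
#include <cstddef>
#include <vector>

// Single-producer, single-consumer ring of float samples. All storage is
// allocated in the constructor, so Write and Read never allocate or block.
class SampleRing
{
public:
    explicit SampleRing(std::size_t capacity)
        : m_buffer(capacity + 1), m_head(0), m_tail(0) {}

    // Producer side (the audio callback). Returns the samples actually stored.
    std::size_t Write(const float *src, std::size_t count)
    {
        std::size_t head = m_head.load(std::memory_order_relaxed);
        const std::size_t tail = m_tail.load(std::memory_order_acquire);
        std::size_t written = 0;
        while (written < count)
        {
            const std::size_t next = (head + 1) % m_buffer.size();
            if (next == tail) break; // Full: drop the rest rather than wait
            m_buffer[head] = src[written++];
            head = next;
        }
        m_head.store(head, std::memory_order_release);
        return written;
    }

    // Consumer side (a normal-priority worker). Returns the samples read.
    std::size_t Read(float *dst, std::size_t count)
    {
        std::size_t tail = m_tail.load(std::memory_order_relaxed);
        const std::size_t head = m_head.load(std::memory_order_acquire);
        std::size_t read = 0;
        while (read < count && tail != head)
        {
            dst[read++] = m_buffer[tail];
            tail = (tail + 1) % m_buffer.size();
        }
        m_tail.store(tail, std::memory_order_release);
        return read;
    }

private:
    std::vector<float> m_buffer;     // One slot stays empty to tell full from empty
    std::atomic<std::size_t> m_head; // Next slot to write
    std::atomic<std::size_t> m_tail; // Next slot to read
};
```

When the ring is full, Write drops samples instead of waiting, which trades data loss for a callback that never blocks. Whether that trade is acceptable depends on the app.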

Getting the data is as easy as calling IAudioCaptureClient::GetBuffer and IAudioCaptureClient::ReleaseBuffer. Because you cannot call GetBuffer again before calling ReleaseBuffer, make sure this section of code never executes on multiple threads at the same time. One way is to wrap the capture handling code in a critical section, which keeps a second thread from entering the capture code while the first is still inside it. However, as long as you call MFPutWaitingWorkItem to schedule the next callback only after calling ReleaseBuffer, other synchronization might not be necessary. Either way, once you have copied the capture frames, remember to schedule a wait for the next audio event with MFPutWaitingWorkItem.

C++

UINT64 u64DevicePosition = 0, u64QPC = 0;

DWORD dwFlags = 0;

UINT32 nFramesToRead = 0;

LPBYTE pData = NULL;

hr = m_Capture->GetBuffer(&pData, &nFramesToRead, &dwFlags, &u64DevicePosition, &u64QPC);

CopyCaptureFrames(pData, &pFrameBuffer);

hr = m_Capture->ReleaseBuffer(nFramesToRead);

// Schedule a new work item only after releasing the buffer

hr = MFPutWaitingWorkItem(m_hCaptureCallbackEvent, 0, m_CaptureCallbackResult.Get(), &m_CaptureCallbackKey);

If there are some glitches in the audio capture, audio callback can be delayed or even frames skipped. There are various reasons why these would happen, but you should be aware of these happening and know how to handle these in your code.

Every time you call GetBuffer you also receive some additional flags, the device position, and clock data. In shared mode you can test for the AUDCLNT_BUFFERFLAGS_DATA_DISCONTINUITY flag to detect a gap in the capture stream. The device position is the frame index of the start of the capture buffer within the capture stream. So if you have a capture stream initialized with a periodicity of 480 frames, the first capture will report device position 0, the second 480, the third 960, and so on. Note that if you stop the stream and start it again, the device position counter will not reset. To reset it, you can explicitly call IAudioClient::Reset on a stopped stream. Also, do not assume that the first device position will always be zero, because some transient processes that settle after stream start can cause a gap right at the start of the stream.

You can store the device position and compare it to the next value retrieved. The difference has to equal the capture buffer size, or else frames have been dropped.

C++

nMissedFrames = u64DevicePosition - u64LastDevicePosition - nCaptureBufferFrames;

if (nMissedFrames > 0)

{

// Missed frames

There is another timing value captured: Windows has a high-precision counter (see QueryPerformanceCounter), and the u64QPC variable contains a value from this counter converted to 100 ns units. The value is relative and has no relation to application, capture, or system startup time. What you can do, though, is store the value and calculate how much time has passed since the last callback. If the difference is significantly different from the intended capture buffer length (in time), then the callback was delayed. When rendering captured data in real time, take care not to queue up audio frames that arrive late, as this will build up latency.
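The two checks described above (device position gaps and late callbacks) can be combined in a small bookkeeping class. This is a portable sketch under stated assumptions: CaptureMonitor and CaptureCheck are hypothetical names, every packet is assumed to hold exactly one period of frames, and the inputs are the device position and QPC values reported by GetBuffer.

```cpp
#include <cstdint>

// Result of comparing one capture packet with the previous one.
struct CaptureCheck
{
    std::int64_t missedFrames; // Frames lost since the previous packet
    std::int64_t lateBy100ns;  // Arrival delay beyond one period, in 100 ns units
};

class CaptureMonitor
{
public:
    CaptureMonitor(std::uint32_t framesPerPeriod, std::uint32_t samplesPerSecond)
        : m_framesPerPeriod(framesPerPeriod),
          m_period100ns(10000000LL * framesPerPeriod / samplesPerSecond),
          m_hasLast(false), m_lastPosition(0), m_lastQpc(0) {}

    // Call once per packet with the values reported by GetBuffer.
    CaptureCheck OnPacket(std::uint64_t devicePosition, std::uint64_t qpc100ns)
    {
        CaptureCheck check = {0, 0};
        if (m_hasLast)
        {
            // Signed results, so an early packet shows up as a negative value
            // instead of wrapping around to a huge unsigned one.
            check.missedFrames =
                static_cast<std::int64_t>(devicePosition - m_lastPosition) - m_framesPerPeriod;
            check.lateBy100ns =
                static_cast<std::int64_t>(qpc100ns - m_lastQpc) - m_period100ns;
        }
        m_hasLast = true;
        m_lastPosition = devicePosition;
        m_lastQpc = qpc100ns;
        return check;
    }

private:
    std::int64_t m_framesPerPeriod;
    std::int64_t m_period100ns;
    bool m_hasLast;
    std::uint64_t m_lastPosition;
    std::uint64_t m_lastQpc;
};
```

The first packet only records a baseline, which matches the advice not to assume that the first device position is zero.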

Any data that you fail to read from the buffer is lost. There are no error values or flags to indicate that you have failed to read some data.

Render

Writing render data to the buffer happens through the IAudioRenderClient interface, which is very similar to the capture interface: you get a pointer to the buffer you need to write to by calling IAudioRenderClient::GetBuffer, then you write the data to the buffer and call ReleaseBuffer. The only difference is that the render client's version of GetBuffer does not provide timing and device position data. If you require time and position information, use IAudioClient::GetService to retrieve the IAudioClock interface and use that to read the audio clock data.

When running a render stream in push (non-event driven) mode, you do not need to write the whole buffer length of data at once; you can write it in fragments. What is important is that you call IAudioClient::GetCurrentPadding before writing data to the render buffer. It tells you how much valid, unread data the render buffer currently contains. So at most you can write the buffer length minus the padding, in frames, to the buffer.

C++

UINT32 nPadding = 0;

HRESULT hr = m_AudioClient->GetCurrentPadding(&nPadding);

UINT32 nFramesAvailable = m_nRenderBufferFrames - nPadding;

EnterCriticalSection(&m_RenderCriticalSection);

LPBYTE pRenderBuffer = NULL;

hr = m_Render->GetBuffer(nFramesAvailable, &pRenderBuffer);

CopyRenderData(pRenderBuffer, pRenderSource);

hr = m_Render->ReleaseBuffer(nFramesAvailable, 0);

LeaveCriticalSection(&m_RenderCriticalSection);
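The frame count passed to GetBuffer above is simple arithmetic, but it is easy to get wrong when the source has fewer frames left than the buffer has room for. A minimal portable sketch, with FramesToWrite as a hypothetical helper name:

```cpp
#include <algorithm>
#include <cstdint>

// How many frames can be written in push mode: the free space in the render
// buffer (buffer length minus padding), limited by what the source still has.
std::uint32_t FramesToWrite(std::uint32_t bufferFrames,
                            std::uint32_t paddingFrames,
                            std::uint32_t sourceFramesLeft)
{
    // Guard against unsigned underflow if padding ever exceeds the buffer size.
    const std::uint32_t freeFrames =
        paddingFrames < bufferFrames ? bufferFrames - paddingFrames : 0;
    return std::min(freeFrames, sourceFramesLeft);
}
```

With a 480-frame buffer and 120 frames of padding, at most 360 frames fit; if the source only has 100 frames left, the call should request 100.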

In an event-driven callback you should always write the whole buffer, and the padding value is useless. Also note that if you need to render silence then you can omit copying data to the buffer and specify the flag AUDCLNT_BUFFERFLAGS_SILENT in the call to ReleaseBuffer. The audio engine then treats the data packet as though it contains silence regardless of the data values contained in the packet.

Putting it all together

When coding your app, you’ll need the following include files:

- Audioclient.h – Definitions for WASAPI interfaces

- mmdeviceapi.h – Definition for ActivateAudioInterfaceAsync

- mfapi.h – Media Foundation functionality

- wrl/implements.h, wrl/client.h – WRL templates for smart COM pointer and interface implementation templates

For linking you should include mmdevapi.lib and mfplat.lib. You can do so by including the following pragma statements:

C++

#pragma comment (lib,"Mmdevapi.lib")

#pragma comment (lib,"Mfplat.lib")

When writing an app like this it can be difficult to debug any problems. A memory bug in one callback, for example, might cause a crash in another place. Also, if there is no immediate crash but the code does not behave as needed, it is hard to understand the patterns, because the callbacks and processing depend on many conditions and are executed rapidly and repeatedly. To minimize all kinds of memory bugs it is a good idea to use common practices for safe C++ code (smart pointers and the standard template library) as much as possible.

Different functions will return values in different measures that have variable size units, so it is very important to keep track of that. Use best coding practices like including the unit in the variable name (nCapturedBytes, nCapturedFrames).
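As a small illustration of keeping units explicit, the helpers below convert between frames and bytes for a PCM layout. This is a sketch only: PcmLayout and the function names are hypothetical, and the struct simply mirrors the nChannels and wBitsPerSample fields of WAVEFORMATEX.

```cpp
#include <cstdint>

// The two WAVEFORMATEX fields that determine the size of one frame.
struct PcmLayout
{
    std::uint16_t nChannels;
    std::uint16_t wBitsPerSample;
};

// Size of one frame in bytes (the same value as nBlockAlign for PCM).
std::uint32_t BytesPerFrame(PcmLayout layout)
{
    return layout.nChannels * (layout.wBitsPerSample / 8u);
}

std::uint32_t FramesToBytes(std::uint32_t nFrames, PcmLayout layout)
{
    return nFrames * BytesPerFrame(layout);
}

std::uint32_t BytesToFrames(std::uint32_t nBytes, PcmLayout layout)
{
    return nBytes / BytesPerFrame(layout);
}
```

For 16-bit stereo, one frame is 4 bytes, so a 480-frame buffer holds 1920 bytes. Naming the variables nCapturedFrames and nCapturedBytes makes it obvious which of the two a function expects.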

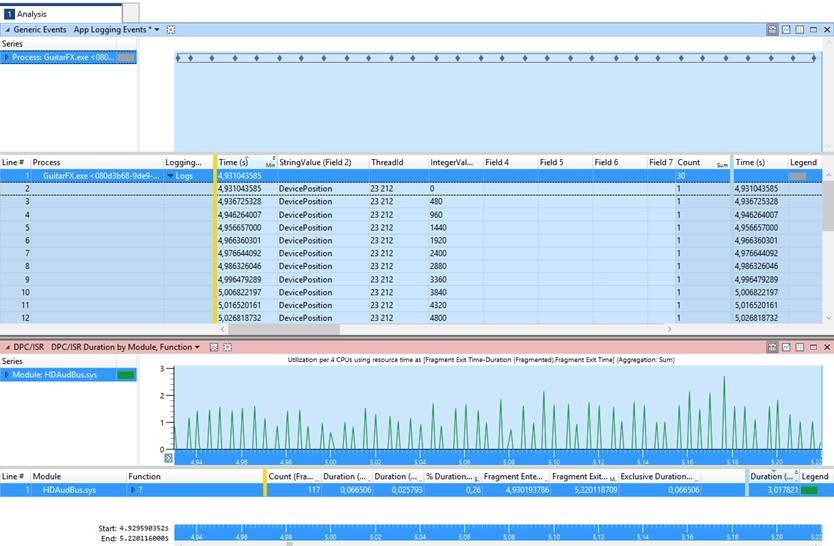

To understand the pattern of execution, it is useful to use the event tracing that is built into Windows. Event tracing is a low overhead functionality which has public GUI tools to analyze traces.

The simplest way to use event tracing is probably to use functionality that is built into the Windows::Foundation::Diagnostics namespace:

C++

// Static global variable

Windows::Foundation::Diagnostics::LoggingChannel ^g_LoggingChannel;

// Initialize during the startup of the application

g_LoggingChannel = ref new Windows::Foundation::Diagnostics::LoggingChannel("MyChannel");

// You can now log an event

g_LoggingChannel->LogMessage("OnCaptureCallback");

g_LoggingChannel->LogValuePair("DevicePosition", devicePosition);

To capture events you can now run trace tools like perfview.exe (download PerfView) or Windows Performance Recorder and Analyzer (download as part of the Windows Performance Toolkit). Events logged using the Windows::Foundation::Diagnostics APIs will appear under the provider name Microsoft-Windows-Diagnostics-LoggingChannel, so make sure you include that provider when collecting a trace.

Here is a sample output of trace events in which the capture device position was written to the log on each capture callback. The log is then viewed together with the HD Audio bus DPC/ISR events.

Sample

To illustrate this post, there is a sample available at https://rtaudiowsapps.codeplex.com/ which implements a digital delay effect.

Links

- User-Mode Audio Components

- Stream Latency During Playback

- Stream Latency During Recording

- WASAPI

- Exclusive Mode Streams

- Device Formats

- Multimedia Class Scheduler Service

- Using Work Queues

- Work Queue and Threading Improvements

- Measuring DPC/ISR Time

- Multimedia Win32 and COM APIs for Windows Store Apps

- Windows Hardware Certification Requirements

- XAudio2 Introduction