While our quality measurements show improving trends in aggregate for each successive Windows 10 release, we take it seriously whenever a single customer experiences an issue with any of our updates. Today, I will share an overview of our Windows as a Service approach and how we work to continuously improve the quality of Windows. As part of our commitment to being more transparent about our approach to quality, this post is the first in a series of more in-depth explanations of the work we do to deliver quality in our Windows releases.

Critical to any discussion of Windows quality is the sheer scale of the Windows ecosystem, where tens of thousands of hardware and software partners extend the value of Windows as they bring their innovation to hundreds of millions of customers. With Windows 10 alone we work to deliver quality to over 700 million monthly active Windows 10 devices, over 35 million application titles with more than 175 million application versions, and 16 million unique hardware/driver combinations. In addition, the ecosystem delivers new drivers, firmware, application updates and/or non-security updates daily. Simply put, we have a very large and dynamic ecosystem that requires constant attention and care during every single update. That all this scale and complexity can “just work” is key to Microsoft’s mission to empower every person and every organization on the planet to achieve more.

Windows 10 marked a change in how we develop, deliver and update Windows, in what we call “Windows as a Service.” We shifted responsibility for base functional testing to our development teams in order to deliver higher-quality code from the start. We also changed the focus of the teams that still report to me, which are responsible for end-to-end validation. And we added a fundamentally new capability to our approach to quality: using data and feedback to better understand, and intensely focus on, the experiences our customers are having with our products across the spectrum of real-world hardware and software combinations. This combination of testing, engagement programs, feedback, telemetry, real-life insight across complex environments and close partner engagement proved to be a powerful approach, one that let us focus our feature innovation and monthly updates on delivering improved quality.

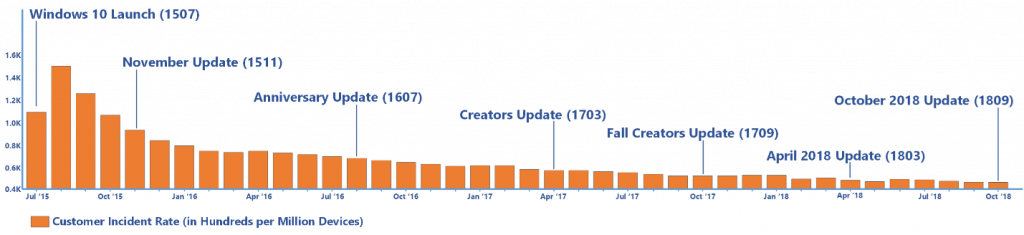

This data-driven listening approach has also allowed us to track quality differently. Over the last three years, one of our key indicators of product quality, customer service call and chat volume, has steadily dropped even as the number of machines running Windows 10 increased. Another key indicator we track is Net Promoter Score (NPS): we ask people to rate their Windows experience and track the balance of “promoters” and “detractors.” Today, the Windows 10 April 2018 Update has the highest Net Promoter rating of any version of Windows 10. While we are encouraged by these improving quality trends at scale, we also understand that the trend doesn’t matter if you happen to be one of the people experiencing an issue. Our goal is to provide everyone with only the best experiences on Windows, and we take all feedback seriously. We are committed to learning from each occurrence and to rigorously applying the lessons to improve both our products and the transparency of our process.

Continued product quality improvement trend: declining customer incident rate

Testing in Redmond

Our approach to product quality begins by listening to customers and representing their feedback in all aspects of our development process. First, we invest in customer and partner planning feedback to help us shape and frame our product specifications, including defining both required testing and success metrics. We employ a wide variety of automated testing processes as we develop features, allowing us to detect and correct issues quickly. We regularly look for gaps in our tests, and we often find them through our own internal experiences and the issues we see with Windows Insiders. As a result, this suite of automated tests grows over time. The most fundamental of these tests must pass for features and code to “integrate up” into the main Windows build that will eventually ship to customers. In a future post we’ll detail the extensive testing we do in-house, but it is safe to say that testing is a key part of delivering Windows.

Internally, Windows has what we call an aggressive “self-host” culture. “Self-host” means that employees working on Windows run the latest internal versions on their machines to ensure they are living with Windows. The “aggressive” part refers to the tenacious push to make sure local teams run their own builds and pursue any issues found. A strong self-host culture is a source of pride for those of us working on Windows.

Engaging our partners

Given the breadth of our shared ecosystem, testing goes well beyond our Redmond campus and extends around the world to dedicated Windows test labs as well as the facilities of our many partners. In this way we validate in-market and in-development releases at scale through arrangements with key testing partners including:

- External testing labs with global, continuous coverage for application compatibility, hardware and peripherals

- Independent software vendors (ISVs) for a range of apps, including antivirus (AV) software

- OEMs, who partner with us to test and ensure quality across a vast array of systems, devices and drivers

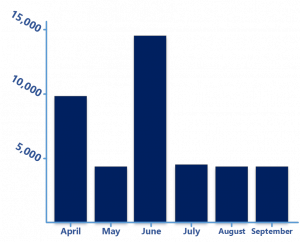

Our approach to the driver ecosystem alone will make for an interesting post in this series. High-quality device drivers are key to a great experience, and we must engage closely with our hardware partners to deliver drivers at scale. The chart below shows the driver volume we can face month to month, with June peaking at almost 15,000 drivers delivered into the ecosystem!

Monthly driver volume via Windows Update

Engaging our customers

Delivering a quality product requires that we engage customers for feedback on our designs and plans. That is why we created the Windows Insider program at the beginning of Windows 10. Beyond the valuable insights Insiders share about the experience, the Windows Insider program extends the volume and diversity of device usage well beyond what we can obtain in our own controlled environments. With Windows Insiders we gain fresh insights and feedback on user experience, compatibility, performance and more. Insider populations are balanced between pre-release and Release Preview versions that receive cumulative quality updates for drivers and many applications.

We also engage with commercial customers through many programs. The Windows Insider Program for Business allows organizations to access Insider Preview builds to validate apps and infrastructure ahead of the next public Windows release. The program helps IT professionals give us feedback on the features they use to deploy and manage Windows in an organization. The program has grown by 43 percent in the past six months, and we just introduced Olympia v2, which provides a complete Microsoft 365 deployment and management testing environment. We also have an invitation-only program for large enterprise customers, the Technology Adoption Preview (TAP) program, that lets customers provide early feedback on product updates via real-world product testing to help us identify issues during the development process.

During development and stabilization of an update, we use all our engagement programs to identify and fix issues that often emerge only in real-life settings. We also continue automated and manual testing, as do our partners across the ecosystem. We compare quality to previous flights and releases using all the feedback tools available to us, including Feedback Hub and social media. When we are confident in the update experience, we begin a cautious release of the feature update to our customers.

Data-driven decision making

One of the most critical advances we have made in our approach to quality is to continually improve the data-driven decisions we make about the reliability of our product, both in development and in market. We begin by using detailed dashboards and metrics to evaluate the builds that we install daily on our own PCs. Only when we have clear evidence that measurable quality is at an acceptable level do we start sharing flights with Windows Insiders for feedback. Our dashboards and metrics then scale to the volume of quality data and feedback we receive once a product is being flighted or is in market. We obsess over these metrics as we strive to improve product quality, comparing current quality levels across a variety of metrics to historical trends and digging into any anomaly. Our data-driven approach is implemented with the highest standards of data privacy protection for our valued customers; you can learn more on our Privacy at Microsoft page.
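To make “comparing current quality levels … to historical trends” a little more concrete, here is a minimal sketch of one simple way to flag an anomaly: a z-score test of a build’s metric against earlier flights. This is an illustrative assumption, not a description of our actual dashboards. The function names, metric names (`crash_rate`, `hang_rate`) and the threshold of 3 standard deviations are all hypothetical.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], current: float, threshold: float = 3.0) -> bool:
    """Return True if `current` lies more than `threshold` standard deviations
    from the mean of `history` (a plain z-score test; illustrative only)."""
    if len(history) < 2:
        raise ValueError("need at least two historical data points")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        return current != mu
    return abs(current - mu) / sigma > threshold


def anomalous_metrics(history: dict[str, list[float]],
                      current: dict[str, float],
                      threshold: float = 3.0) -> list[str]:
    """Check every metric of the current build against its own history and
    return the names of those that look anomalous, sorted alphabetically."""
    return sorted(name for name, value in current.items()
                  if is_anomalous(history[name], value, threshold))


# Hypothetical per-flight rates (e.g. events per 1,000 devices).
past = {"crash_rate": [1.9, 2.1, 2.0, 2.2, 1.8],
        "hang_rate": [0.5, 0.6, 0.5, 0.55, 0.45]}
print(anomalous_metrics(past, {"crash_rate": 3.0, "hang_rate": 0.52}))
# -> ['crash_rate']
```

A single global threshold is the simplest possible choice. A real system would likely account for seasonality, population mix and sample size before treating a deviation as a signal worth digging into.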

Rollout principles

Part of our “Windows as a Service” evolution is that we no longer “ship” Windows the way we did before Windows 10. We use our real-time detection and response capabilities to roll out Windows in a careful, data-driven way, and these rollout practices represent some of the most impactful changes we have made to improve the Windows experience.

The first principle of a feature update rollout is to only update devices that our data shows will have a good experience. One of our most recent improvements is to use a machine learning (ML) model to select the devices that are offered updates first. If we detect that your device might have an issue, we will not offer the update until that issue is resolved.

Second among our principles is to start slowly – to prioritize the update experience over rollout velocity. When a new feature update release is available, we first make it available to a small percentage of “seekers,” users who take action to get the updates early.

Third, we monitor carefully to learn about new issues. We do this by watching our telemetry, closely partnering with our customer service team to understand what customers report to us, analyzing feedback logs and screenshots directly through our Feedback Hub, and listening to signals sent through social media channels. If we find a combination of factors that results in a bad experience, we create a block that prevents similar devices from receiving the update until the issue is fully resolved. We continue to look for ways to improve our ability to detect issues, especially high-impact issues with low volume and potentially weak signals. To better recognize these low-volume issues, we recently added the ability for users to indicate the impact or severity of an issue when they give us feedback.
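To illustrate how the three rollout principles can combine into a single offer decision, here is a minimal sketch. It is a hypothetical model, not how Windows Update is actually implemented. The `Device` and `Rollout` types, the attribute labels such as `"driver:net-1.2"`, and the hash-based percentage bucketing are all assumptions. The ML model’s prediction is stubbed out as a plain boolean.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Device:
    device_id: str
    attributes: frozenset[str]   # hypothetical labels, e.g. "driver:net-1.2"
    is_seeker: bool              # user actively asked for the update early
    predicted_ok: bool           # stand-in for an ML model's verdict


@dataclass
class Rollout:
    percent: int = 1             # share of eligible devices offered, 0-100
    seekers_only: bool = True    # early phase: offer to seekers only
    blocks: list[frozenset[str]] = field(default_factory=list)

    def add_block(self, *attributes: str) -> None:
        """Hold back every device that has ALL of these attributes."""
        self.blocks.append(frozenset(attributes))

    @staticmethod
    def _bucket(device_id: str) -> int:
        # Stable hash -> bucket 0..99, so a device's position is consistent
        # and raising `percent` only ever adds devices to the offer set.
        digest = hashlib.sha256(device_id.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % 100

    def should_offer(self, device: Device) -> bool:
        # Principle 1: only update devices predicted to have a good experience.
        if not device.predicted_ok:
            return False
        # Principle 3: honor blocks on known-bad combinations of factors.
        if any(block <= device.attributes for block in self.blocks):
            return False
        # Principle 2: start slowly, with seekers and a small percentage.
        if self.seekers_only and not device.is_seeker:
            return False
        return self._bucket(device.device_id) < self.percent


rollout = Rollout(percent=5)
rollout.add_block("driver:net-1.2", "app:av-7")   # hypothetical bad combination
```

Widening the rollout here just means raising `percent` and eventually clearing `seekers_only`, while a block is lifted by removing it from `blocks` once a fix ships. Hashing the device ID, rather than sampling at random on each check, keeps the decision stable for a given device across repeated checks.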

Responsive and transparent

Even a multi-layered detection process will miss some issues in an ecosystem as large, diverse and complex as Windows. While we will always work diligently to eliminate issues before rollout, there is always a chance an issue may occur. When one does, we strive to minimize the impact and respond quickly and transparently to inform and protect our customers. Until now our focus has been almost exclusively on detecting and fixing issues quickly; going forward, we will increase our focus on transparency and communication. We believe in transparency as a principle, and we will continue to invest in clear and regular communication with our customers when there are issues. Of course, we have a responsibility to protect customers, and in some cases (e.g., zero-day exploits) we prioritize that protection over transparency until security updates are released and we can again be clear about an issue.

Just the beginning…

We are working on many fronts to ensure our customers have the best, most secure experience on Windows. While we do see positive trends, we also hear clearly the voices of our users who are facing frustrating issues, and we pledge to do more. We will step up our efforts to prevent issues and to respond quickly and openly when issues do arise. We intend to use all the tools we have today and to focus on new quality-focused innovation across product design, development, validation and delivery. We look forward to sharing more about our approach to quality and emerging quality-focused innovation in future posts.